By Gyeyok Haruna, Data Management Executive, British Airways Plc

I have spent years working at the intersection of data, governance and organisational change. And one thing I hear consistently across industries is the same mix of excitement and uncertainty about AI. People want to move fast. They want to innovate. They want to show progress. But the path ahead is not always clear.

It often feels like driving in fog. The road is there. The destination is real. But the visibility is low. You can feel the speed, but you cannot always see the risks ahead.

The numbers reflect this. Recent research shows that AI adoption is outpacing governance in almost seventy percent of organisations. That gap is where things start to slip. It is where rushed decisions happen, where models drift quietly in the background, and where teams end up reacting instead of leading.

I see this pattern across sectors. The tools get better. The pressure to deliver grows. The excitement builds. But the structures that keep everything safe and aligned do not always grow at the same pace. When that happens, organisations lose control of the wheel without even realising it.

The good news is that it does not have to be this way.

Governance is not the brake. It is the gearbox. When it works well, it helps organisations move with clarity, confidence and direction, accelerating safely rather than stopping dead.

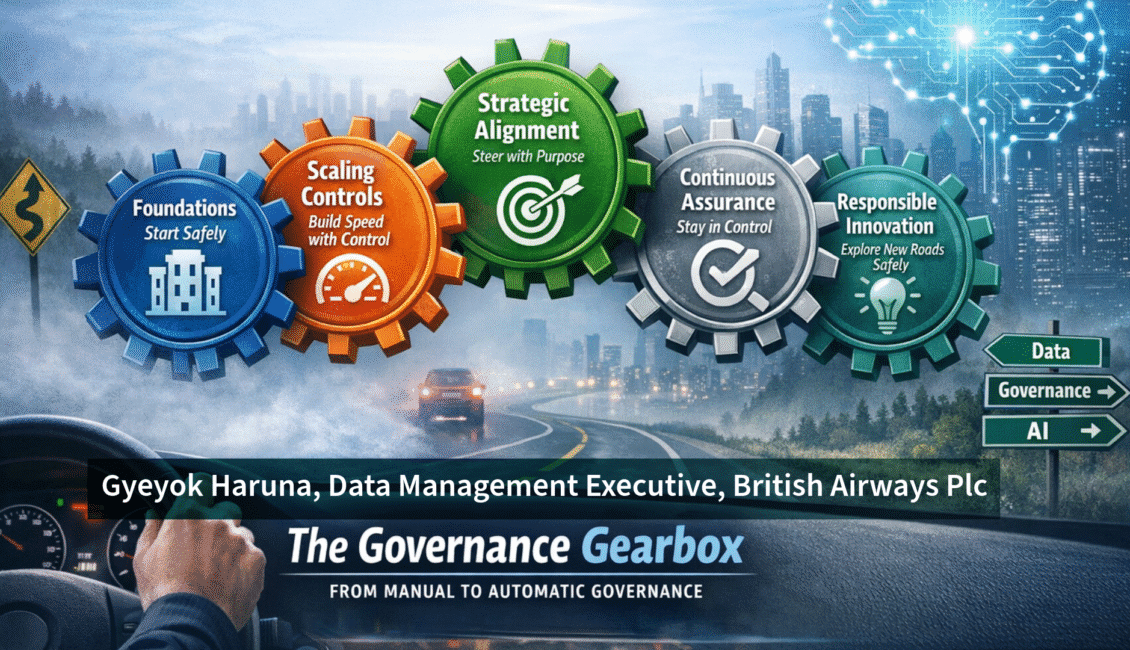

The Governance Gearbox

This is why I developed the Governance Gearbox: a simple, practical framework to help organisations shift from uncertainty to control, from experimentation to scale, and from reactive oversight to strategic leadership. It is built on five gears that work together as a system. They are not separate steps but connected elements that keep AI adoption safe, steady and aligned with real business value.

A single gear spinning alone does nothing. The value comes from how they connect.

Gear 1: Foundations – Start Safely

Every journey begins with getting the basics right. Foundations are the starting point: clear definitions, clear roles, clear expectations, and a shared understanding of what AI is allowed to do and what it is not.

In my experience, most AI issues do not stem from the technology itself. They come from unclear definitions, unclear ownership, and unclear expectations. I have seen how much difference it makes when organisations simply agree on what counts as a high-risk use case, or who is accountable for what decision. It removes friction. It removes guesswork. It gives people the confidence to move forward.

Starting safely sets the tone for everything that follows.

Gear 2: Scaling Controls – Build Speed with Control

Once the foundations are in place, organisations want to build momentum. But speed without control is risky. Scaling controls help teams move faster without losing safety, and critically, they should match the level of risk, not apply blanket friction to everything.

A small model with low organisational impact should have a light-touch check. A complex model that affects people or critical operations deserves a deeper review. When controls scale with complexity, teams can accelerate with confidence. It is the difference between accelerating safely and accelerating blindly.
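To make the idea of risk-matched controls concrete, here is a minimal sketch in Python. Everything in it is illustrative: the `UseCase` fields, the `ReviewTier` names and the rule itself are assumptions made for this example, not a prescribed policy. In particular, the article only describes the two ends of the scale, so the sketch assumes that anything in between defaults to the deeper review.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    """Hypothetical review tiers; real organisations may define more."""
    LIGHT_TOUCH = "light-touch check"
    DEEP_REVIEW = "deeper review"


@dataclass(frozen=True)
class UseCase:
    """Illustrative description of an AI use case (field names are assumptions)."""
    name: str
    is_complex: bool
    affects_people: bool
    affects_critical_operations: bool


def review_tier(use_case: UseCase) -> ReviewTier:
    """Pick a review tier that matches the risk of the use case.

    Assumption: only small, low-impact use cases get the light-touch check.
    Everything else is routed to the deeper review as a cautious default.
    """
    high_impact = use_case.affects_people or use_case.affects_critical_operations
    if not use_case.is_complex and not high_impact:
        return ReviewTier.LIGHT_TOUCH
    return ReviewTier.DEEP_REVIEW
```

For example, `review_tier(UseCase("internal FAQ search", False, False, False))` returns the light-touch tier, while a complex scheduling model that affects people is routed to the deeper review. The point is that the rule is written down and applied consistently, rather than negotiated case by case.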

Gear 3: Strategic Alignment – Steer with Purpose

This is the gear that turns governance into a strategic partner rather than an obstacle. AI work should connect to real business priorities, solve real problems, and support organisational goals, not sit to the side as a technical experiment.

When governance is linked to strategy, teams stop chasing exciting ideas and start focusing on what actually matters. I have seen brilliant models that nobody used, not because they did not work, but because they were not solving a problem anyone needed solved. It is like building a fast car and forgetting to check whether anyone needs to go where it is headed. Steering with purpose keeps the organisation on the right road, not just the fastest one.

Gear 4: Continuous Assurance – Stay in Control as Conditions Change

AI does not stay the same. It changes as data changes. It behaves differently as conditions shift. Continuous assurance keeps everything safe over time through monitoring, traceability, and clear escalation paths when something goes wrong.

The organisations I have seen handle this well treat monitoring as part of the workflow from the start, not as an afterthought. It builds trust. It reduces surprises. It helps teams catch issues early before they become real problems. Staying in control is not about perfection. It is about awareness and readiness.

Gear 5: Responsible Innovation – Explore New Roads Safely

This is my favourite gear because it is not about saying no to new ideas. It is about saying yes, carefully. Responsible innovation creates space for experimentation with boundaries. It encourages creativity while protecting people and the organisation.

This is where human oversight matters most. Where explainability builds trust. Where teams learn from pilots before scaling. When innovation is guided rather than restricted, organisations can explore new ground with confidence, trying new ideas without risking the journey.

A Practical Example: Workforce Scheduling

One use case that brings the gearbox to life is workforce scheduling, a scenario familiar across many industries. An AI model predicts staffing needs and matches people to tasks. It sounds simple, but it touches fairness, transparency and trust.

Here is how all five gears show up in this one use case:

Foundations help teams agree on what fairness actually means in a scheduling context: what data is used, what constraints must be respected, and what is off-limits.

Scaling controls ensure that high-impact scheduling decisions get the right level of human review before being applied at scale.

Strategic alignment keeps the model focused on real outcomes, such as reducing burnout, improving service levels and managing peak demand, rather than optimising for the wrong metric.

Continuous assurance monitors for drift, catching cases where certain groups are being overallocated or where patterns are shifting in ways that were not intended.

Responsible innovation means the model was piloted with human oversight and clear override capabilities before it was ever scaled across the organisation.

This is how the gears work together, not as separate steps, but as a system that keeps everything steady as the organisation moves forward.
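The continuous assurance check described above, catching cases where certain groups are being overallocated, can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `(group, hours)` input shape, the function name `overallocated_groups` and the `tolerance` of 1.2 are all hypothetical, and a real fairness check would be defined during the foundations stage rather than picked by a developer.

```python
from collections import defaultdict
from statistics import mean


def overallocated_groups(shifts, tolerance=1.2):
    """Flag groups whose average scheduled hours exceed the overall average.

    shifts: iterable of (group, hours) pairs, one per person per period.
    tolerance: hypothetical threshold; a group is flagged when its average
        hours are more than `tolerance` times the overall average.
    Returns a dict mapping each flagged group to its ratio against the
    overall average, rounded to two decimal places.
    """
    hours_by_group = defaultdict(list)
    for group, hours in shifts:
        hours_by_group[group].append(hours)

    all_hours = [h for group_hours in hours_by_group.values() for h in group_hours]
    if not all_hours:
        return {}

    overall_average = mean(all_hours)
    if overall_average == 0:
        return {}

    flagged = {}
    for group, group_hours in hours_by_group.items():
        ratio = mean(group_hours) / overall_average
        if ratio > tolerance:
            flagged[group] = round(ratio, 2)
    return flagged
```

Run on each new schedule, a check like this turns "monitor for drift" into something a team can act on: a flagged group is a prompt for human review and, where needed, escalation, not an automatic verdict that the model is unfair.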

From Manual to Automatic

When organisations first begin their AI journey, everything feels manual. Every decision needs attention. Every risk needs discussion. Every step feels deliberate. It is like learning to drive: you think about every movement, check every mirror, feel every shift.

But as the gears settle in, something changes. The foundations hold. The controls scale. The alignment becomes natural. The monitoring becomes routine. Innovation becomes guided rather than risky.

And at that point, governance stops feeling like a manual gearbox. It becomes automatic. It becomes part of the engine. It becomes the way the organisation moves.

That is the real goal. Not more rules. Not more friction. But a system that keeps everyone safe while helping the organisation accelerate with clarity and purpose.

AI is not slowing down. The fog is not lifting anytime soon. But with the right gears in place, organisations can drive forward with confidence, not in spite of governance, but because of it.

Which gear feels weakest in your organisation right now?

I would love to hear where you are on this journey. Connect with me on LinkedIn or come find me at the conference. I will be at the IRM UK breakout session on 23 March 2026.

About the author: Gyeyok Haruna is a Data Management Executive at British Airways Plc, specialising in data governance, data quality and AI governance. She spoke at the IRM UK Data Governance, AI & MDM Conference Europe in London, March 2026. Connect with her on LinkedIn: linkedin.com/in/gyeyok-haruna